The same AI that predicts the next word in a sentence can predict next week's sales. Without ever having seen your data.

A warehouse full of product that is not moving. A stockout on the one SKU that matters this week. A planning team that spends more time adjusting the forecast than acting on it.

This is the state of demand forecasting at most mid-size companies. The numbers are wrong, everyone knows they are wrong, and fixing them requires either better spreadsheets or hiring someone who can build a statistical model. Both are slow.

Here is what we did not expect: large language models — the technology behind ChatGPT — turn out to be surprisingly good at this.

Not because anyone designed them for demand forecasting. Because demand data has exactly the kind of structure that LLMs are built to recognise.

Think about what weekly sales data actually looks like. Repeating cycles. Trends that drift upward or downward. Predictable dips around holidays. Spikes around promotions. These are patterns. Pattern recognition is what language models do better than almost anything else.

Researchers at NYU and CMU showed this at NeurIPS 2023, in a paper titled "Large Language Models Are Zero-Shot Time Series Forecasters". Encode a time series as a string of digits, ask the LLM to predict what comes next, and on the benchmarks they tested it matches or beats purpose-built forecasting methods such as ARIMA and N-BEATS, models that were trained specifically on the target data. The LLM had never seen the dataset. Zero-shot.
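To make the encoding step concrete, here is a minimal Python sketch of turning a series into a string and back. It is an illustration under assumptions, not the researchers' exact scheme: the function names `encode_series` and `decode_series`, the three-digit precision, and the comma separator are all choices made for this example.

```python
def encode_series(values, digits=3):
    """Rescale a series to fixed-width integers and join them into one string.

    Returns the text plus the scale factor needed to decode a reply.
    The rescaling keeps every number short, so the model sees a compact,
    regular sequence instead of long decimals.
    """
    peak = max(abs(v) for v in values)
    # Map the largest magnitude to 10**digits - 1 (999 for three digits).
    scale = (10 ** digits - 1) / peak if peak else 1.0
    text = ", ".join(str(round(v * scale)) for v in values)
    return text, scale


def decode_series(text, scale):
    """Parse a comma-separated reply back into numbers in the original units.

    Parsing stops at the first token that is not an integer, because a model
    reply may trail off into prose.
    """
    values = []
    for token in text.split(","):
        token = token.strip()
        try:
            values.append(int(token) / scale)
        except ValueError:
            break
    return values
```

The string returned by `encode_series` is what goes into the prompt. The model's continuation is passed to `decode_series` with the same scale factor to get a forecast back in sales units.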

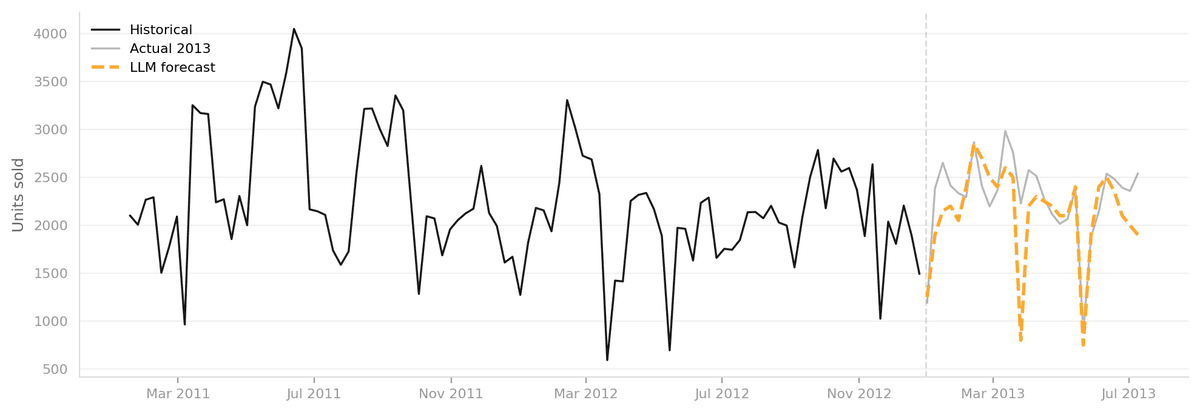

We tested this ourselves. Two years of weekly retail sales data, 2011 and 2012, for a single product category. We asked an LLM to forecast the first 28 weeks of 2013. No fine-tuning. No feature engineering. Just the raw numbers and a knowledge of national holidays.

In the chart above, amber is the forecast. Grey is what actually happened.

The model picked up the seasonal rhythm, the general demand level, and even the sharp dips around Easter and the late May bank holiday. Those same dips were in the history. The LLM found them.
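For readers who want to try something similar, the sketch below shows one way a prompt of this kind could be assembled. It is an assumption about the setup, not a record of the exact prompt used: the function `build_prompt`, the line layout, and the way holidays are attached to weeks are all hypothetical, and sending the text to a model is left out because it depends on the provider.

```python
from datetime import date, timedelta


def build_prompt(history, first_week, horizon, holidays):
    """Lay out weekly sales as dated lines, with blanks for the weeks to forecast.

    history    -- weekly sales, oldest first
    first_week -- start date of the first week in `history`
    horizon    -- number of future weeks to leave blank
    holidays   -- dict mapping a holiday date to its name
    """
    weeks = [first_week + timedelta(weeks=i) for i in range(len(history) + horizon)]

    def label(start):
        # Tag a week with any holiday that falls inside its seven days.
        end = start + timedelta(days=7)
        names = [name for day, name in sorted(holidays.items()) if start <= day < end]
        return f" ({', '.join(names)})" if names else ""

    lines = ["Weekly sales by week start date. Fill in the blank weeks with a forecast."]
    for i, week in enumerate(weeks):
        value = history[i] if i < len(history) else "?"
        lines.append(f"{week.isoformat()}{label(week)}: {value}")
    return "\n".join(lines)
```

Tagging both past and future weeks with holiday names gives the model a chance to connect a dip in the history with the same holiday in the forecast window.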

This is not a replacement for a production forecasting system. But it changes the starting point. Instead of weeks of data cleaning and model training before you know if forecasting is even viable for your problem, you get a credible first answer in hours. We call this the zero-shot baseline — a fast, cheap way to find out whether your data has signal before you commit to a full build.
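One simple way to judge whether a zero-shot forecast is "credible" is to score it against a naive benchmark on weeks you held back. The Python sketch below does this with mean absolute error and a seasonal-naive forecast (same week, one year earlier). The metric, the benchmark, and the function names are assumptions chosen for illustration, not the method used in the test above.

```python
def mean_absolute_error(actual, predicted):
    """Average absolute gap between two equal-length series."""
    if len(actual) != len(predicted):
        raise ValueError("series must have the same length")
    return sum(abs(a - p) for a, p in zip(actual, predicted)) / len(actual)


def seasonal_naive(history, horizon, season=52):
    """Forecast each week as the value from one season earlier."""
    if len(history) < season:
        raise ValueError("need at least one full season of history")
    start = len(history) - season
    return [history[start + (i % season)] for i in range(horizon)]


def skill_vs_naive(actual, candidate, history, season=52):
    """Return 1 - MAE(candidate) / MAE(seasonal naive).

    Above 0 means the candidate beats the naive benchmark, 0 means a tie,
    below 0 means it does worse.
    """
    naive = seasonal_naive(history, len(actual), season)
    naive_mae = mean_absolute_error(actual, naive)
    if naive_mae == 0:
        raise ValueError("naive forecast is already perfect; skill is undefined")
    return 1 - mean_absolute_error(actual, candidate) / naive_mae
```

A score clearly above zero on held-back weeks suggests the data has signal worth building on. A score near or below zero suggests last year's numbers are already doing most of the work.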

For a supply chain team, that is a meaningful shift. You can test viability before committing budget. You can get directional answers on new product categories where you do not have years of history. The barrier to data-driven planning just dropped.

The teams that figure this out will plan better, carry less dead inventory, and react faster when the market moves. The ones that do not will keep adjusting last year's spreadsheet.